Source of truth: Code, Spec, or Requirement?

When code becomes easy to produce, the hard part is remembering what we meant.

I keep thinking about this problem in agentic engineering.

What is the source of truth now?

For a long time, the practical answer was code. The code runs. The code breaks. The code is what production uses. Specs and docs can help, but they often become stale. So we learned not to trust them too much.

Code is honest in a way documents are not. It may be wrong, but it does exactly what it does.

With agentic coding, this becomes more complicated.

As an individual contributor, I can move incredibly fast now. I can open Claude Code or a similar tool, give it direction, and shape the project while it is being built. I may start with a rough spec or just an idea, but the real decisions often happen during implementation. I try something. I see the code. I realise the original idea was not quite right. I adjust. The agent tries another version.

This is not bad. Actually, it is one of the best parts of working this way. Implementation gives feedback. Sometimes the code teaches you what the spec should have been.

So code-first is very tempting. It keeps speed. It keeps flow. It works especially well when the person steering the agent has the whole picture in their head.

But that is also the problem.

In that case, the real source of truth is not the code and not the spec. It is the experienced person.

They know the edge cases. They know the dependencies. They know which interface is fragile. They know why some strange behaviour exists. They know when the generated code is technically correct but still wrong. They are continuously filling the gaps.

The agent is not really working from a complete spec. It is working with a human who carries the missing context.

This works while the system fits inside one brain.

But real systems do not stay like this. They grow. They get delegated. Teams split. People leave. New people join. Some engineers understand the product but not the architecture. Some understand the architecture but not the domain. Some are junior. Some are moving fast. And the agent only knows what we gave it, plus whatever it can infer.

At that point, memory becomes a bad source of truth.

We forget dependencies. We forget edge cases. We forget why something was built in a strange way. We forget which downstream system depends on a behaviour. Not because people are bad, but because we are human.

Agents do not magically solve this. If something is not described, they will improvise. They will choose something plausible. And plausible is often enough to pass the first review.

The same is true for humans. If a behaviour is not specified, we should not expect anyone to implement it exactly as we imagined.

This is where I think the word “spec” is not enough.

In many software teams, a spec is a temporary artifact. You write it to start the task. It helps the engineer or the agent. Then implementation happens, some things change, and the spec is effectively dead. Maybe it still exists in Notion, Linear, Jira, GitHub, or a markdown file. But nobody really trusts it six months later.

If the spec is temporary, of course code wins.

But maybe the better word is requirement.

And this is not a new idea. This is basically how regulated industries already work. In aerospace, medical, automotive, and similar fields, requirements are normal. Teams there can produce multi-hundred-page documents explaining the whole system, but usually the result is not really one big document in the simple sense. It is a set of requirements, sub-requirements, interfaces, tests, evidence, and links. A graph that can be turned into a document when needed.

That difference matters.

A spec often says: here is what we want to build.

A requirement says: here is what the system must do, what it must not do, how we know it was implemented, and where the evidence is.

The requirement does not die when the task is done. The code implements it. The test proves it. The evidence is attached to it. If something changes, the requirement becomes part of the review again.
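To make this concrete for myself, here is a minimal sketch of a requirement as a long-lived record rather than a task description. Everything in it is an assumption for illustration: the `Requirement` class, its field names, and the `gaps` helper are hypothetical and do not come from any real requirements tool.

```python
from dataclasses import dataclass, field


@dataclass
class Requirement:
    """A hypothetical long-lived requirement record (illustrative only)."""
    req_id: str
    must: str                      # what the system must do
    must_not: str = ""             # what the system must not do
    implemented_by: list[str] = field(default_factory=list)  # code paths
    verified_by: list[str] = field(default_factory=list)     # test identifiers
    evidence: list[str] = field(default_factory=list)        # e.g. CI run references

    def gaps(self) -> list[str]:
        """Name the links that are still missing for this requirement."""
        missing = []
        if not self.implemented_by:
            missing.append("implementation")
        if not self.verified_by:
            missing.append("test")
        if not self.evidence:
            missing.append("evidence")
        return missing
```

The point of the sketch is only that the record outlives the task: a requirement with a non-empty `gaps()` result is implemented or tested on trust, not on evidence.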

This is the part I think normal software engineering may need to borrow, but without copying all the heaviness.

Not because we suddenly want bureaucracy. I don’t. But because agentic engineering makes implementation faster, and the faster we implement, the easier it becomes to lose intent.

The dangerous drift is not always a bug. The code can work. The tests can pass. CI can be green. But the product intent may have moved a little. The domain behaviour may not be exactly right. A security assumption may be weaker. An interface may have changed in a way nobody noticed.

Everything is green, but it is green around slightly wrong intent.

I also do not think the answer is just “write a huge spec first.” That can fail too. A detailed spec can make wrong assumptions before reality pushes back. Then implementation starts, the spec is challenged, and now you have spec drift instead of code drift.

So for me the real question is not code-first or spec-first.

The real question is how we manage the drift between intent and implementation.

If the code does something different from the requirement, it should not silently become the new truth. But if the requirement is wrong, it should not block reality forever either. There should be a stop. The team should ask: is the code wrong, is the requirement wrong, or did we learn something?
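One way I can imagine the "stop" is as a gate that stays closed until someone records an answer to that question. This is a sketch under my own assumptions: the `Drift` record, the `Resolution` values, and the `blocking` function are hypothetical names, not part of any existing CI system.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Resolution(Enum):
    """The three possible answers from the paragraph above."""
    CODE_WRONG = "fix the code"
    REQUIREMENT_WRONG = "fix the requirement"
    LEARNED_SOMETHING = "update both and record why"


@dataclass
class Drift:
    """A detected mismatch between a requirement and the implementation."""
    requirement_id: str
    observed: str
    resolution: Optional[Resolution] = None  # None means nobody has decided yet


def blocking(drifts: list[Drift]) -> list[str]:
    """Return the requirement IDs whose drift is still unresolved."""
    return [d.requirement_id for d in drifts if d.resolution is None]
```

A change would pass the gate only when `blocking(...)` returns an empty list, so neither the code nor the requirement can silently win.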

Until that is resolved, something is broken in the process.

This is where traceability matters. Not as documentation for documentation’s sake, but as an invalidation mechanism.

If code changes, the related requirement and tests should become suspicious. If a test changes, the requirement should be checked. If a requirement changes, the code and tests should definitely be reviewed. The system should say: this thing changed, so these other things are no longer fully trusted.
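The invalidation rules above are simple enough to sketch. This is not a real tool, just a minimal illustration under assumptions: the `Trace` class, the `INVALIDATES` table, and the `suspects` function are hypothetical names, and artifacts are identified by plain strings.

```python
from dataclasses import dataclass, field

# Which kinds of artifact lose trust when a given kind changes.
# This mirrors the three rules in the paragraph above.
INVALIDATES = {
    "code": {"requirement", "test"},
    "test": {"requirement"},
    "requirement": {"code", "test"},
}


@dataclass
class Trace:
    """One requirement plus the code and tests linked to it."""
    requirement: str
    code: set[str] = field(default_factory=set)
    tests: set[str] = field(default_factory=set)

    def members(self, kind: str) -> set[str]:
        """Return the artifacts of the given kind in this trace."""
        by_kind = {"requirement": {self.requirement}, "code": self.code, "test": self.tests}
        return by_kind[kind]


def suspects(traces: list[Trace], changed: str) -> set[str]:
    """Return every artifact that is no longer fully trusted after `changed` changes."""
    result: set[str] = set()
    for trace in traces:
        for kind, targets in INVALIDATES.items():
            if changed in trace.members(kind):
                for target in targets:
                    result |= trace.members(target)
    result.discard(changed)  # the changed artifact itself is reviewed anyway
    return result
```

For example, with a trace linking a hypothetical `REQ-1` to `billing.py` and `test_billing.py`, a change to `billing.py` marks both `REQ-1` and `test_billing.py` as suspect, while a change to the test alone marks only `REQ-1`.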

This is also why evidence matters.

I do not trust humans. I do not trust AI either. I want the evidence.

A green CI run is useful, but it is evidence of what? That the code passes current tests? Or that the system still matches the original intent?

Those are not the same thing.

In larger projects, the original intent becomes a spec, then tasks, then subtasks, then test cases. Every step narrows the focus. Everyone implements their piece. Everyone tests their piece. Locally, everything can look correct. But who validates the final system against the original intent?

Usually not systematically.

This is the part I want to think more about. Maybe requirement management, in some lighter and more modern form, becomes much more important for normal software teams. Not because requirements are new, but because agentic development changes the cost of not having them.

Code is still the runtime truth. It tells us what the system does.

But the requirement is the intent truth. It tells us what the system is supposed to mean.

And evidence is what connects them.

I do not have the full answer yet. I still do not know how detailed requirements should be before they become harmful. I do not know which parts should be formal and which should stay flexible. I do not know how to make traceability work inside Git, PRs, CI, and agentic coding tools without making everyone hate it.

But I think the direction is becoming clearer.

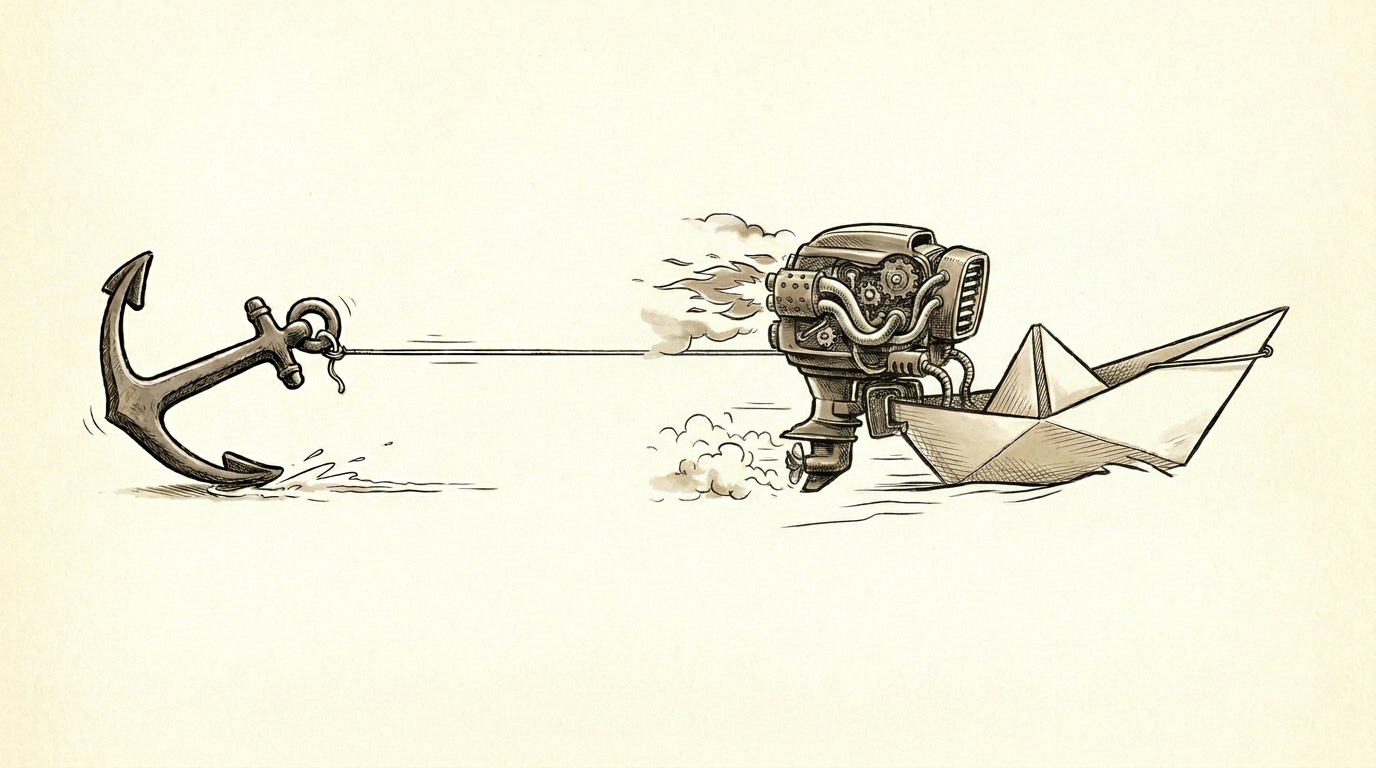

In the old world, code was expensive to produce, so code naturally became the main asset. In the agentic world, code may become cheaper to produce, but intent becomes easier to lose.

And if intent is the thing we can lose, maybe intent is the thing we need to manage much more seriously.